What is Anomaly Detection?

The image above was drawn by an A.I. when we tasked it with creating artwork for the words “Anomaly Detection.”

17,000 British men reported being pregnant between 2009 and 2010, according to the Washington Post. These British men sought care for pregnancy-related issues like obstetric checkups and maternity care services. However, this wasn’t due to a breakthrough in modern medicine! Someone entered the medical codes into the country’s healthcare system incorrectly. Simply put, the data was recorded poorly, and there was no quality check to spot the error!

It’s hard to blame Britain’s healthcare services. Quality, by definition, is subjective. Creating quality checks for every possible variety of incorrect data is a massive undertaking. It’s hard to expect even the most data-mature companies to anticipate every mistake. However, if there were a way to employ AI or ML (machine learning), these solutions could learn from our datasets independently. They could spot errors like this without us having to explicitly say, “if entry is male, then don’t provide maternity care.”

Actually, there is. It’s called anomaly detection.

What is a data quality check?

Before we can get into the marvels of anomaly detection, we have to understand what a data quality check is (and how it works).

A data quality check specifies standards for data’s dimensions, i.e., its completeness, validity, timeliness, uniqueness, accuracy, and consistency. Data either satisfies or fails those standards, which reveals whether its quality is high or low.

Learn more about what data quality is and why it’s important in our ultimate guide!

Data quality rules will specify what the user defines as high-quality data. For example, a hospital might define a senior patient as over the age of 60. A simple data quality rule could have the following form:

Rule: Senior Patient Age > 60

In reality, each hospital might have different standards for defining a senior patient. Thus, they might define these rules differently. This way, a company can define various rules to identify problematic data. These rules are then added to a “rules library” and used during data quality monitoring to identify low-quality entries.

Once your company populates this rules library, you’ll develop a standard or “usual behavior” that you expect your data to adhere to. Data that doesn’t stick to those standards is invalid, incomplete, inaccurate, etc.

For example, under our senior patient rule above, if a patient’s age is 35 and a user labels them as a “senior patient,” this data point would be invalid.
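To make the idea concrete, here is a minimal sketch of how such a rule might be checked in code. The field names `age` and `label`, the threshold constant, and the function name are illustrative assumptions, not part of any particular product.

```python
SENIOR_AGE_THRESHOLD = 60  # assumed cut-off; each hospital may choose its own


def violates_senior_rule(record: dict) -> bool:
    """Return True when a record is labeled senior but fails 'age > 60'."""
    is_labeled_senior = record.get("label") == "senior patient"
    return is_labeled_senior and record["age"] <= SENIOR_AGE_THRESHOLD


# Hypothetical records, made up for illustration.
patients = [
    {"id": 1, "age": 72, "label": "senior patient"},
    {"id": 2, "age": 35, "label": "senior patient"},  # invalid per the rule
    {"id": 3, "age": 41, "label": "adult patient"},
]

invalid_ids = [p["id"] for p in patients if violates_senior_rule(p)]
print(invalid_ids)
```

Every rule in a rules library would need its own explicit check like this one, which is exactly the effort anomaly detection tries to reduce.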

What is anomaly detection?

Anomaly detection (also referred to as outlier detection) involves identifying data observations, events, or data points that are different from what’s considered normal (called “anomalies”).

Instead of DQ rules, it uses ML/AI to scan your data for any unusual or unexpected results compared to the rest of the dataset. Once it learns how your data system works, it can automatically detect values that don’t fit the norm (or fall outside of these patterns) and flag the entries to alert the relevant parties.

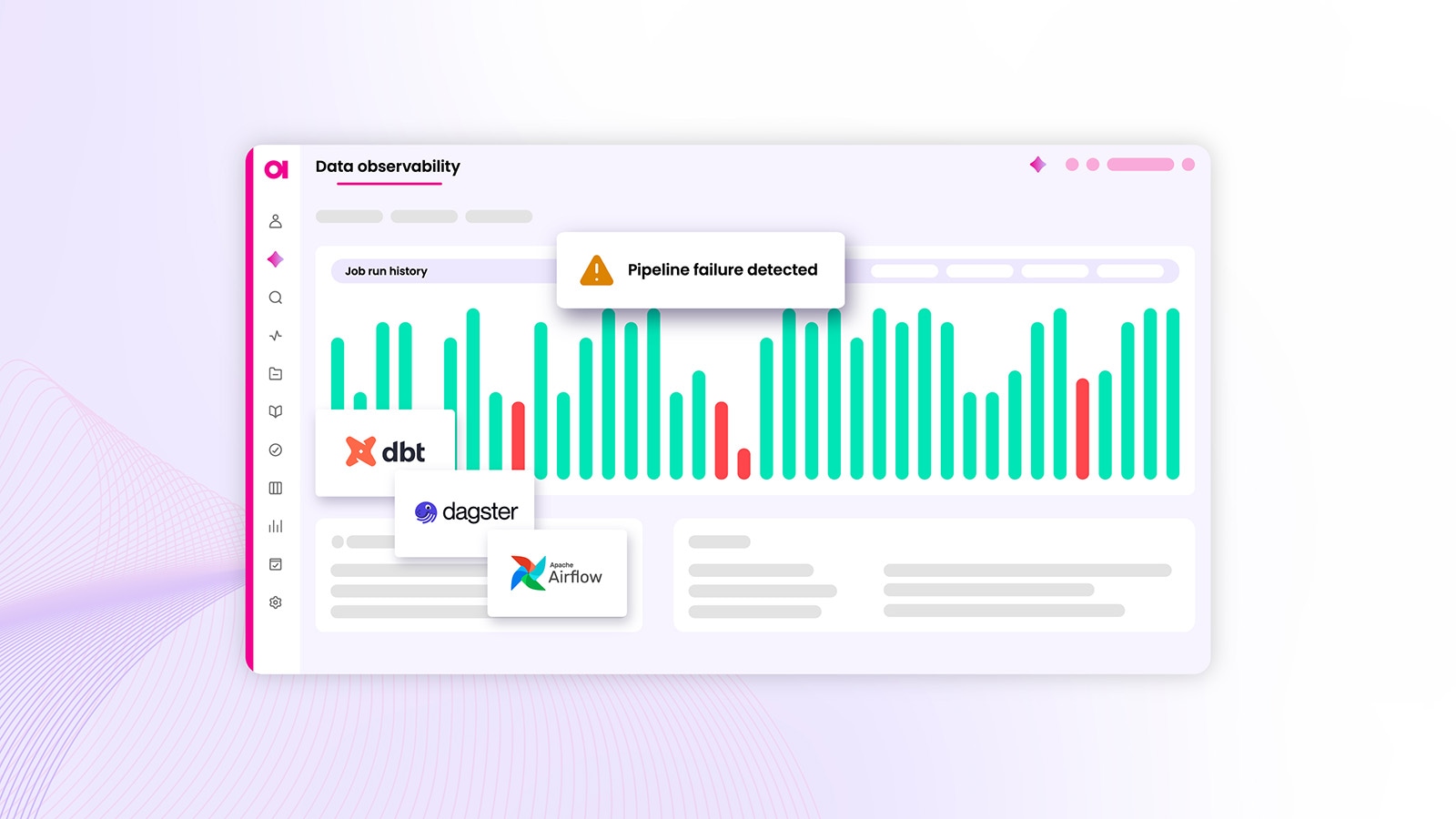

Once you receive an alert about an anomaly, you will find information on why the anomaly detection service labeled that entry anomalous. For example, suppose a hospital recorded 10,000 patients in February, and the healthcare system receives an alert flagging this entry as anomalous. The alert would explain the flag using context from the dataset: this hospital usually has around 1,000 patients per month, so the sudden jump was unexpected. The same information could also be displayed as a graph.

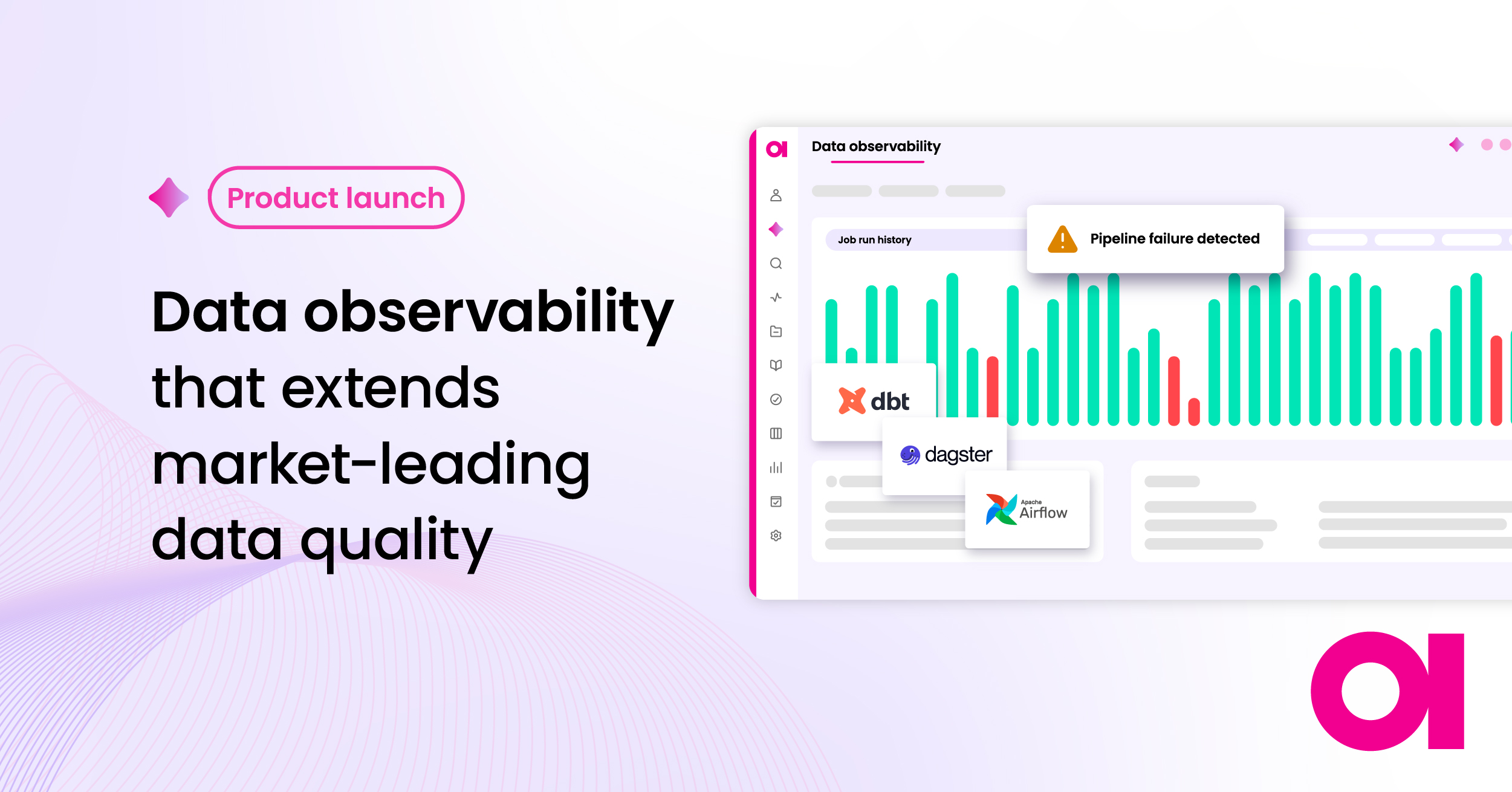
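As a rough illustration of an alert that explains itself, the sketch below scores a new monthly count against the history using the median and the median absolute deviation. This is one simple statistical stand-in, not the algorithm any specific service uses; the function name, the threshold of 3.5, and the sample numbers are assumptions.

```python
from statistics import median


def explain_if_anomalous(history, new_value, threshold=3.5):
    """Return a short explanation if new_value looks anomalous, else None."""
    typical = median(history)
    # Median absolute deviation (MAD): a spread estimate that outliers barely move.
    mad = median([abs(x - typical) for x in history])
    if mad == 0:
        return None  # no variation in the history; this sketch does not judge
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score.
    score = 0.6745 * (new_value - typical) / mad
    if abs(score) <= threshold:
        return None
    return (
        f"{new_value} patients is unexpected: this hospital usually has "
        f"around {typical} per month (robust z-score {score:.1f})."
    )


# Hypothetical monthly patient counts for one hospital.
monthly_patients = [980, 1020, 1005, 990, 1010, 1000, 995, 1015, 1003, 998, 1012]
alert = explain_if_anomalous(monthly_patients, 10_000)
print(alert)
```

The returned message carries the context (the usual level and how far the new value deviates), which is what lets a person decide whether the flag is a real problem.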

You can then take that information and decide whether it’s an anomaly or a normal data point. Maybe that hospital had a big jump in patients due to COVID-19. Depending on how you respond, some anomaly detection algorithms can learn from this feedback and become more accurate in detecting anomalies in the future. Anomaly detection is a fundamental part of any automated data quality workflow, such as data observability.

In our introductory hospital example above, let’s say that all people applying for pregnancy-related services were labeled with the tag “PREG.” If the vast majority of patients using those services had an “F” (female) in the gender column, anomaly detection would notice right away if a patient who was “M” (male) received the “PREG” tag. You wouldn’t need to write the rule “PREG must be F” to prevent this mistake from happening.
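The sketch below shows one simple way this could work without a hand-written rule: count how often each value combination occurs and flag combinations that are rare. The column names `tag` and `gender`, the 1% cut-off, and the synthetic rows are assumptions for illustration; real services may use quite different models.

```python
from collections import Counter


def rare_combinations(rows, key_a, key_b, max_share=0.01):
    """Flag rows whose (key_a, key_b) pair is rare among rows with the same key_a."""
    pair_counts = Counter((r[key_a], r[key_b]) for r in rows)
    a_counts = Counter(r[key_a] for r in rows)
    flagged = []
    for r in rows:
        share = pair_counts[(r[key_a], r[key_b])] / a_counts[r[key_a]]
        if share <= max_share:
            flagged.append(r)
    return flagged


# Synthetic data: 999 female patients and 1 male patient with the "PREG" tag.
rows = [{"tag": "PREG", "gender": "F"} for _ in range(999)]
rows.append({"tag": "PREG", "gender": "M"})

flagged = rare_combinations(rows, "tag", "gender")
print(flagged)
```

Nothing in the code mentions “PREG” or “M” explicitly; the pattern is learned from the frequencies in the data.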

What are the different types of anomaly detection methods?

Different business personas define anomalies in their data differently; therefore, several anomaly detection methods may apply.

The marketing team might get an abnormal amount of webinar registrations, receive more inbound requests from domains in one company than they usually do, or have too many requests from one country (more than the norm). These anomalies can affect their work performance and would be labeled as critical.

Data engineers might be more interested in conflicting information about the same entity (like a customer) in two different systems.

A data scientist might see average sales numbers for a random Thursday in February. However, that Thursday was a public holiday when sales are expected to triple, so an “average” day is itself unexpected. This is for sure a critical anomaly as well!

Therefore, you could say that anomaly definition and, consequently, anomaly detection are rather subjective. The important part to remember is that anomaly detection services must be capable of detecting all forms of abnormalities.

At Ataccama, we like to define anomalies based on their proximity to the data. Moving from high-level (far from the actual data, more general info about the data itself) to low-level (anomalies in the columns of data, row by row, specific values/data points), we can define anomalies in three categories:

- metadata

- transactional data

- record data

Metadata anomalies

Metadata is data that uses metrics to describe actual underlying data. For example, data quality metadata refers to information about the quality of data resources (databases, data lakes, etc.). Metadata management allows you to organize and understand your data in a way that is uniquely meaningful to your use case, keeping it consistent and accurate as well.

Anomalies at this level deal with data “in general” and are the farthest from the data itself. This anomaly detection method identifies anomalies about the data, not in the data (however, they can still signify a problem in the data).

Anomalies at this level include an unexpected drop in data quality, a dataset or data point that is usually labeled one way but has been tagged in another, a number of records that is missing, too low, or too high, and any other unexpected occurrence when extracting data about the data you have stored.
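A minimal sketch of a metadata-level check might profile each batch (row count and share of missing values per column) and compare it to a baseline profile. The function names and the fixed tolerances below are assumptions; a learning-based service would derive the expected ranges from history rather than hard-code them.

```python
def profile(rows, columns):
    """Summarize a batch: row count plus the share of missing values per column."""
    n = len(rows)
    null_rate = {
        c: (sum(1 for r in rows if r.get(c) is None) / n if n else 0.0)
        for c in columns
    }
    return {"row_count": n, "null_rate": null_rate}


def metadata_anomalies(baseline, current, count_tolerance=0.5, null_tolerance=0.1):
    """Compare two profiles and describe what changed more than the tolerances."""
    findings = []
    expected = baseline["row_count"]
    if expected and abs(current["row_count"] - expected) / expected > count_tolerance:
        findings.append(f"row_count changed from {expected} to {current['row_count']}")
    for col, base_rate in baseline["null_rate"].items():
        rate = current["null_rate"].get(col, 1.0)
        if rate - base_rate > null_tolerance:
            findings.append(f"null rate of '{col}' rose from {base_rate:.0%} to {rate:.0%}")
    return findings


# Synthetic batches: the new one is much smaller and half its genders are missing.
baseline_rows = [{"age": 30 + i % 40, "gender": "F"} for i in range(100)]
current_rows = [{"age": 30 + i % 40, "gender": None if i % 2 else "F"} for i in range(40)]

findings = metadata_anomalies(
    profile(baseline_rows, ["age", "gender"]),
    profile(current_rows, ["age", "gender"]),
)
print(findings)
```

Note that the check never inspects an individual value; it only looks at statistics about the data, which is what places it at the metadata level.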

Transactional data anomalies

Moving on from metadata and closer to specific data, we arrive at the middle-level anomaly detection method: transactional data. We call this the middle level because you are dealing with values from the actual data but through an aggregated lens (e.g., every five days or every five minutes).

Transactional data usually contains some form of monetary transaction, and analyzing it in aggregate can be very beneficial. For example, if you had an aggregation of sales for every five minutes, you could use it to determine the busiest hour, whether it’s worth staying open past 8 p.m., etc.

Anomalies occurring at this level could be an unexpected increase in sales during one of the slower weeks of the year, a shopping holiday receiving similar totals as a typical day of the week, or one branch’s performance dipping unusually low in a busy month.
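The aggregation step can be sketched as follows: individual transactions are rolled up into five-minute buckets, and the buckets are then summed per hour to find the busiest one. The function names and sample transactions are made up for illustration, and the bucketing assumes the bucket size divides 60 evenly.

```python
from collections import defaultdict
from datetime import datetime


def bucket_start(ts: datetime, minutes: int = 5) -> datetime:
    """Round a timestamp down to the start of its N-minute bucket."""
    return ts.replace(minute=ts.minute - ts.minute % minutes, second=0, microsecond=0)


def aggregate_sales(transactions, minutes: int = 5):
    """Sum (timestamp, amount) pairs into N-minute buckets."""
    totals = defaultdict(float)
    for ts, amount in transactions:
        totals[bucket_start(ts, minutes)] += amount
    return dict(totals)


# Hypothetical transactions: (timestamp, amount).
transactions = [
    (datetime(2023, 2, 2, 18, 1, 30), 20.0),
    (datetime(2023, 2, 2, 18, 3, 10), 15.0),
    (datetime(2023, 2, 2, 18, 7, 45), 40.0),
    (datetime(2023, 2, 2, 20, 12, 5), 5.0),
]

five_minute_totals = aggregate_sales(transactions)

# Roll the five-minute buckets up to hours to find the busiest hour.
hourly = defaultdict(float)
for start, total in five_minute_totals.items():
    hourly[start.hour] += total
busiest_hour = max(hourly, key=hourly.get)
print(busiest_hour)
```

Anomaly detection at this level would then run on the bucket totals rather than on the individual transactions.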

Record-level anomalies

At the record level, this anomaly detection method flags specific values that are suspicious in the dataset. These values could be labeled as anomalous if one of the data points is missing, incomplete, inconsistent, or incorrect.

Our intro is an excellent example of a record-level anomaly. One of the values in the dataset (the gender) was unexpected and didn’t agree with other values from the system. This is just one row of information, a part of a bigger set that could contain the patient’s age, previous conditions, height, weight, etc.

Anomaly detection at the record level explores the dataset row by row in each table and column, looking for any inconsistencies. It can reveal issues in data collection, aggregation, or handling.

What are the two types of time series anomaly detection?

Now that we understand the different types of anomalies, we can get into the two approaches to detecting them, which differ in how they treat time.

One approach focuses on time as the primary context of the data, while the other focuses on finding anomalies in the context of normal behavior. These two types of anomaly detection are called time-dependent and time-independent.

Time-dependent anomaly detection

Time-dependent data evolves over time (think about our example of transactional data), so it’s important to know when a value was captured, when it was entered, in what order multiple entries arrived, etc. Usually, users will group (aggregate) such data together (for example, hourly or daily) and look for anomalies or trends on a group level, spotting outliers contextually.

For example, when you have daily data (i.e., recorded once per day), you can expect some seasonality. In other words, Monday’s expected value might differ from Tuesday’s. Therefore, different values might be anomalous on different days.

Furthermore, such data often changes over longer periods. This can be expressed by the trend of the data or by a drift in the data. All these patterns need to be captured by the time-dependent anomaly detection algorithm.

Here we see how an anomaly detection service would spot and flag a time-dependent anomaly. In this type of detection, the time series is broken down into seasonal, trend, and residual components.
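To show why the day matters, here is a deliberately simplified sketch that handles only weekly seasonality: it learns a separate baseline per weekday and scores a new value against the baseline for its own day. It ignores trend and drift, which a real time-dependent algorithm would also model. The function names, threshold, and synthetic history are assumptions.

```python
from collections import defaultdict
from datetime import date, timedelta
from statistics import median


def weekday_baselines(history):
    """Map weekday (0 = Monday) to (median, MAD) from (date, value) pairs."""
    by_day = defaultdict(list)
    for day, value in history:
        by_day[day.weekday()].append(value)
    baselines = {}
    for weekday, values in by_day.items():
        mid = median(values)
        mad = median([abs(v - mid) for v in values])
        baselines[weekday] = (mid, mad)
    return baselines


def is_seasonal_anomaly(baselines, day, value, threshold=3.5):
    """Score a value against the baseline of its own weekday only."""
    mid, mad = baselines[day.weekday()]
    spread = max(mad, 1e-9)  # guard against division by zero
    return abs(0.6745 * (value - mid) / spread) > threshold


# Synthetic history: eight weeks starting Monday 2023-01-02, busy weekends.
base_by_weekday = [100, 110, 105, 120, 150, 300, 280]
start = date(2023, 1, 2)
history = [
    (start + timedelta(days=7 * week + offset), base_by_weekday[offset] + week % 3 - 1)
    for week in range(8)
    for offset in range(7)
]

baselines = weekday_baselines(history)
monday_flag = is_seasonal_anomaly(baselines, date(2023, 2, 27), 300)
saturday_flag = is_seasonal_anomaly(baselines, date(2023, 3, 4), 300)
print(monday_flag, saturday_flag)
```

The same value of 300 is flagged on a Monday but accepted on a Saturday, because each is compared with what is normal for that day.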

Time-independent anomaly detection

The second approach applies to any data without a time dimension, i.e., “time-independent” data. In other words, it’s unimportant when the data was created or entered into the system, what order it arrived in, etc. Only the actual values matter.

Therefore, the algorithm only needs to learn what the expected values are or, better, to group them into “clusters of normality.”

These anomalies are more relevant for master data management (as opposed to transactional data): customer records, product data, reference data, and other “static data.”

Here we see how an anomaly detection service would spot and flag a time-independent anomaly. It identifies “clusters of normality” (in blue) among multiple datasets.
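One simple way to approximate “clusters of normality” is to measure how far each point is from its nearest neighbors: points inside a cluster have close neighbors, while an anomaly does not. The sketch below uses k-nearest-neighbor distance as a stand-in technique; it is not a claim about how any particular service works, and the function names, `k`, `factor`, and sample points are assumptions.

```python
from math import dist
from statistics import median


def knn_distance(point, others, k=3):
    """Distance from point to its k-th nearest neighbor among others."""
    distances = sorted(dist(point, other) for other in others)
    return distances[k - 1]


def find_outliers(points, k=3, factor=3.0):
    """Flag points whose k-NN distance is far above the typical k-NN distance."""
    scores = []
    for i, point in enumerate(points):
        others = points[:i] + points[i + 1:]
        scores.append(knn_distance(point, others, k))
    typical = median(scores)
    return [p for p, score in zip(points, scores) if score > factor * typical]


# Synthetic records with two numeric attributes: two tight clusters and one stray.
points = [
    (1.0, 1.0), (1.2, 0.9), (0.8, 1.1), (1.1, 1.3), (0.9, 0.8),
    (10.0, 10.0), (10.2, 9.9), (9.8, 10.1), (10.1, 10.3), (9.9, 9.8),
    (5.0, 20.0),
]

outliers = find_outliers(points)
print(outliers)
```

No timestamps are involved: the order of the points could be shuffled and the result would be the same.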

What are the essential features of detecting anomalies?

When investing in an anomaly detection service (or a platform with anomaly detection capabilities), there are four requirements to keep in mind:

- Extensive

- Self-service

- Holistic

- Explainable

In the following figure, you can see these characteristics with a detailed explanation below.

Extensive

Users often need a versatile tool that covers a wide variety of data. Anomaly detection algorithms should work reasonably well on very different data: few data points or many, near-constant data or high-variance data, many anomalies or rare ones, and data containing changepoints (drifts).

Self-Service

The AI anomaly detection tool should also be usable by many people with different roles in their organizations (and varying levels of technical expertise). Users employ anomaly detection services to reduce their workload, not to add an extra burden! Therefore, the algorithm should require minimal configuration so that users don’t get distracted or discouraged before they even start analyzing the data.

Holistic

Users usually need AI anomaly detection to cover the majority, if not all, of their data pipeline. They might have trouble with anomalies in transactional data, metadata, record-level data, or all three. Additionally, their needs may change over time. They need a complete solution that scales with their changing demands and recognizes anomalies at every level, from high-level metadata down to individual records.

Explainable

People love AI because it does part of their work for them. At the same time, people are often uncomfortable giving up control and letting AI make decisions for them. That’s why they want the suggestions AI makes to be explainable so that they understand how it got there.

This is relevant not only to DQ and anomaly detection but also to topics of ethical AI, for example, preventing discrimination against certain groups of loan applicants in banking. By having transparency in AI’s decision-making, algorithms can be updated to be more “ethical.”

Ataccama makes detecting anomalies easier

In summary, anomaly detection methods and algorithms allow you to spot unwanted or unexpected values in your data without having to specify rules and standards. They take a snapshot of your datasets and detect anomalies by comparing new data to the patterns they have found in the past in the same or similar datasets.

Here is what to expect from an AI anomaly detection tool:

- Whether these anomalies occur at a high level (like with metadata) or close to the actual data itself (like with record-level anomalies), your anomaly detection service needs to be able to spot them.

- Both time-dependent and time-independent anomaly detection are necessary for it to apply to all types of data.

- Your service also must be able to handle diverse data types, be easily usable and adaptable, and have explanations available when it flags values as anomalous.

The field of anomaly detection continues to grow and evolve. AI and machine learning are becoming more widely adopted and implemented across the data management space. We can expect anomaly detection to become increasingly proactive, not reactive. These tools will be able to spot problematic data before it enters downstream systems where it can cause harm.

Anomaly detection is valuable because it usually reveals an underlying problem beyond the data, such as defective machines in IoT devices, hacking attempts in networks, infrastructure failures in data merging, or inaccurate medical checks. These issues are often difficult to anticipate and, consequently, difficult to write DQ rules for. Therefore, AI/ML-based anomaly detection is the best method for spotting them.

Now that you know how valuable anomaly detection is, you’re probably eager to implement it for your data management initiative. Ataccama ONE has anomaly detection built into our data catalog software so you can organize your data and spot anomalies all in the same place. Learn more on the platform page!

Check out our Data Catalog Software today or learn more about what data cataloging is with the help of our ultimate guide!