Trust your data, accelerate your AI

Scalable data management for better AI and business outcomes.

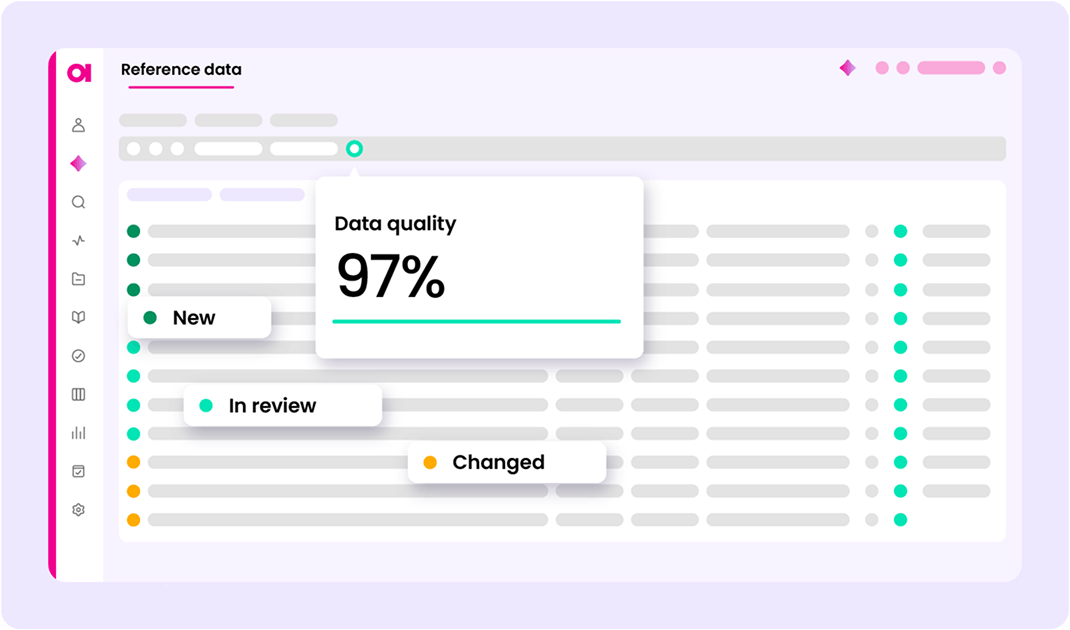

Data quality, observability, catalog, and lineage with a built-in AI Agent to streamline your stack and speed impact.

Data Observability now available

Data trust can break in motion. Now you can detect, triage, and remediate issues before they affect the business and strategic AI initiatives downstream.

The modern AI stack: A blueprint for trusted agentic AI

The 5-layer modern AI stack from Ataccama, Snowflake, and Deloitte: the architecture that separates enterprises scaling agentic AI reliably from those stuck in proof of concept.

2026 Gartner Market Guide for Data Observability Tools

Access the report for insights into how the data observability landscape has shifted, and why Gartner named Ataccama a Representative Vendor.

From messy data silos to data products that drive growth

Scale data management for the AI era

Manual processes and overloaded data teams won't work anymore. Extend your team with our AI Agent, your digital data steward. Federate data quality into the business with agility, not headcount.

Stay audit-ready, always

Act on data quality issues before regulators come knocking. Turn compliance from a recurring fire drill into a lasting foundation.

Data modernization

Maximize your Snowflake or Databricks investment and minimize rework by bringing trusted data with you. Start clean, stay clean.

Make your data AI-ready

Your AI is only as good as the data behind it. Ensure every dataset is verified and trusted so your models and agents deliver reliable outcomes.

Our customers drive impact

"As part of the journey we've gone through with Ataccama, we're becoming more data‑first, moving from assumption to assurance around data quality."

"Other vendors are transactional in their behavior. With Ataccama, there's a genuine belief of shared responsibility of success that we feel within T-Mobile."

"With Ataccama, leadership trusts our data and knows our decisions are defensible. Data quality is foundational to how we operate and has helped us drive $30-40 million in value."

The data trust layer for your modern AI stack

Data Trust Index scores your data. MCP connects it to your AI stack.

Applications

Value realization

This is the layer enterprises actually feel. Agents don't surface outputs for human review here; they execute inside operational systems. Refunds are issued. Compliance escalations are filed. Service requests are routed and resolved, all at machine speed.

What lives here:

- Autonomous AI agents built on platforms like Agentforce, ServiceNow, and Ironclad

- Copilots powered by ChatGPT, Microsoft Copilot, and Power BI

- Chatbots and self-service applications like Fin AI and Sierra

- Custom-built AI apps using Cursor, Replit, and Bolt

The foundation decides the outcome

The quality of everything below this layer determines whether this layer is an asset or a liability. Agents can only be trusted to act autonomously when the data they act on has been validated, certified, and cleared, before execution, not after.

Agent orchestration

Reasoning & planning

This is where large language models, agent frameworks, and retrieval systems take trusted data and turn it into action. Agents reason over enterprise context, plan sequences of steps, and coordinate execution across systems, without a human authorizing each move.

What lives here:

- Agent frameworks like LangChain, CrewAI, AutoGen, and LlamaIndex

- Foundation models including GPT, Gemini, Claude, Llama, and others

- Vector stores for retrieval-augmented generation

- MCP as the interface connecting agents to governed data sources and tools

Probabilistic reasoning needs a deterministic foundation

LLMs are powerful precisely because they handle ambiguity well. But when agents trigger financial transactions or compliance actions, that same flexibility becomes a risk. Orchestration can't resolve data conflicts on its own; it needs certified, governed context passed up from the layer below.

Data trust layer

Context & quality

Most AI initiatives have Layers 1, 2, 4, and 5. What they're missing is this one. The data trust layer sits between consolidated data and the systems that act on it, validating, governing, resolving, and certifying data before any agent is permitted to execute against it.

What lives here:

- Data quality validation and automated remediation at the source

- Entity resolution that produces a single authoritative master record

- Data governance including catalog, lineage, and business definitions

- Continuous observability that detects anomalies as they emerge

- The Data Trust Index: a real-time, machine-readable signal that tells agents whether a dataset is cleared for autonomous action
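As a rough illustration of how an agent might consume a machine-readable trust signal like the one in the last bullet, the sketch below gates an action on a score. Everything in it is hypothetical: the `TrustSignal` fields, the 0 to 100 scale, the `min_score` threshold, and the `is_cleared` and `issue_refund` helpers are assumptions made for illustration, not the Data Trust Index schema or any Ataccama API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrustSignal:
    """Hypothetical trust signal for one dataset (not a real vendor schema)."""
    dataset: str
    score: float      # assumed scale: 0 (untrusted) to 100 (fully trusted)
    certified: bool   # assumed flag set by a governance process


def is_cleared(signal: TrustSignal, min_score: float = 90.0) -> bool:
    """Return True only if the dataset is certified and meets the threshold."""
    return signal.certified and signal.score >= min_score


def issue_refund(signal: TrustSignal, amount: float) -> str:
    """Act autonomously only on cleared data; otherwise escalate to a human."""
    if not is_cleared(signal):
        return f"escalated: {signal.dataset} not cleared (score={signal.score})"
    return f"refund of {amount:.2f} issued using {signal.dataset}"


if __name__ == "__main__":
    print(issue_refund(TrustSignal("billing.customers", 96.5, True), 42.0))
    print(issue_refund(TrustSignal("crm.contacts", 71.0, True), 42.0))
```

The check runs before the action rather than after it, which mirrors the "before execution, not after" principle described for the applications layer.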

Available data isn't trusted data

The gap between available and trusted is exactly where agentic AI breaks down. Data that loads without errors and passes schema checks can still carry duplicates, stale values, and missing context. This layer closes that gap, continuously, across the entire data estate. Ataccama ONE delivers this layer as a unified platform, combining data quality, observability, governance, lineage, and reference & master data into a single environment purpose-built for trusted agentic AI.
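To make that gap concrete, here is a minimal sketch of checks that go beyond schema validation: every row below has the right fields and types, yet the data still contains duplicates, a stale record, and an empty value. The record keys (`customer_id`, `email`, `updated_at`), the 365-day staleness window, and the `quality_report` function are all assumptions for illustration, not how any particular platform implements these checks.

```python
from collections import Counter
from datetime import datetime, timedelta


def quality_report(rows, now, max_age_days=365):
    """Count issues that schema validation alone would not catch.

    Each row is a dict with the illustrative keys 'customer_id', 'email',
    and 'updated_at' (a datetime). All names and thresholds are assumptions.
    """
    ids = Counter(r["customer_id"] for r in rows)
    cutoff = now - timedelta(days=max_age_days)
    return {
        # Rows whose key is shared with at least one other row.
        "duplicates": sum(n for n in ids.values() if n > 1),
        # Rows not updated within the allowed window.
        "stale": sum(1 for r in rows if r["updated_at"] < cutoff),
        # Rows where a required field is present but empty.
        "missing_email": sum(1 for r in rows if not r["email"]),
    }


if __name__ == "__main__":
    now = datetime(2025, 1, 1)
    rows = [
        {"customer_id": 1, "email": "a@example.com", "updated_at": datetime(2024, 12, 1)},
        {"customer_id": 1, "email": "a@example.com", "updated_at": datetime(2024, 11, 1)},
        {"customer_id": 2, "email": "", "updated_at": datetime(2021, 5, 1)},
    ]
    print(quality_report(rows, now))
```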

Cloud lakehouses

Storage & compute

Before AI can operate at scale, enterprise data needs to live in one place. Cloud lakehouses consolidate fragmented source data into a scalable, compute-capable environment built for modern AI workloads, eliminating the infrastructure barriers that make coordinated AI deployment nearly impossible.

What lives here:

- Cloud data platforms like Snowflake, Databricks, Amazon Redshift, and Google BigQuery

- Native Python execution for data science and ML pipelines

- Open table formats like Apache Iceberg for cross-platform flexibility

Consolidation solves infrastructure. It doesn't solve quality

Centralizing data is the necessary first move, and the return is substantial. But migrating data to a modern cloud platform doesn't clean it, resolve duplicate entities, or certify it for AI use. That work happens in the layer above.

Data sources

Raw data

Every enterprise AI program starts here, with the operational systems that run the business: ERP platforms, CRMs, billing systems, document repositories, and data feeds that were built for process efficiency, not AI consumption. The data is there. The problem is what it carries.

What lives here:

- Structured sources like SAP, Workday, Oracle, Salesforce, NetSuite, and PostgreSQL

- Unstructured sources like Slack, Gmail, SharePoint, Notion, and Google Drive

- Operational and transactional feeds across business functions

Years of quality debt come with the territory

Source systems accumulate inconsistent entity definitions, duplicate records, incomplete fields, and business logic that varies by region, system vintage, or the migration that last touched them. That's not an exception at enterprise scale. It's the expected condition of raw data, and it's exactly what the layers above are designed to address.

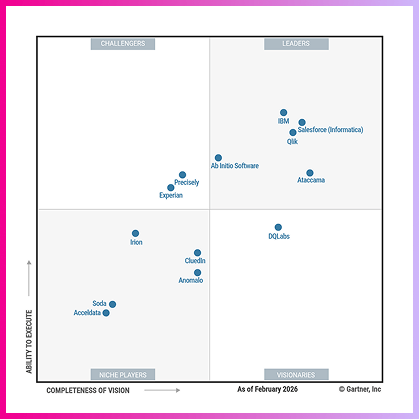

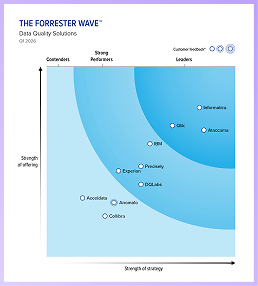

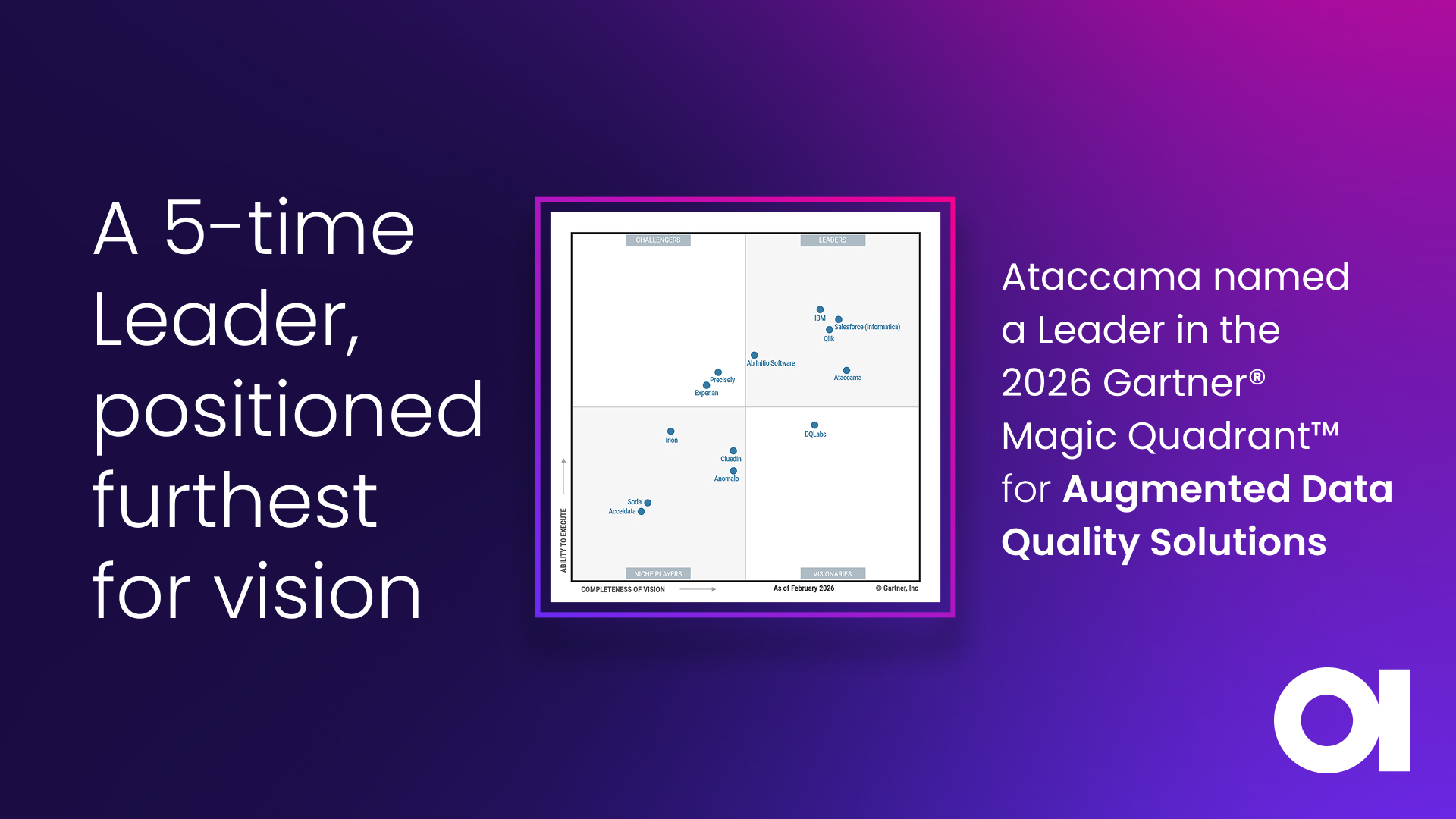

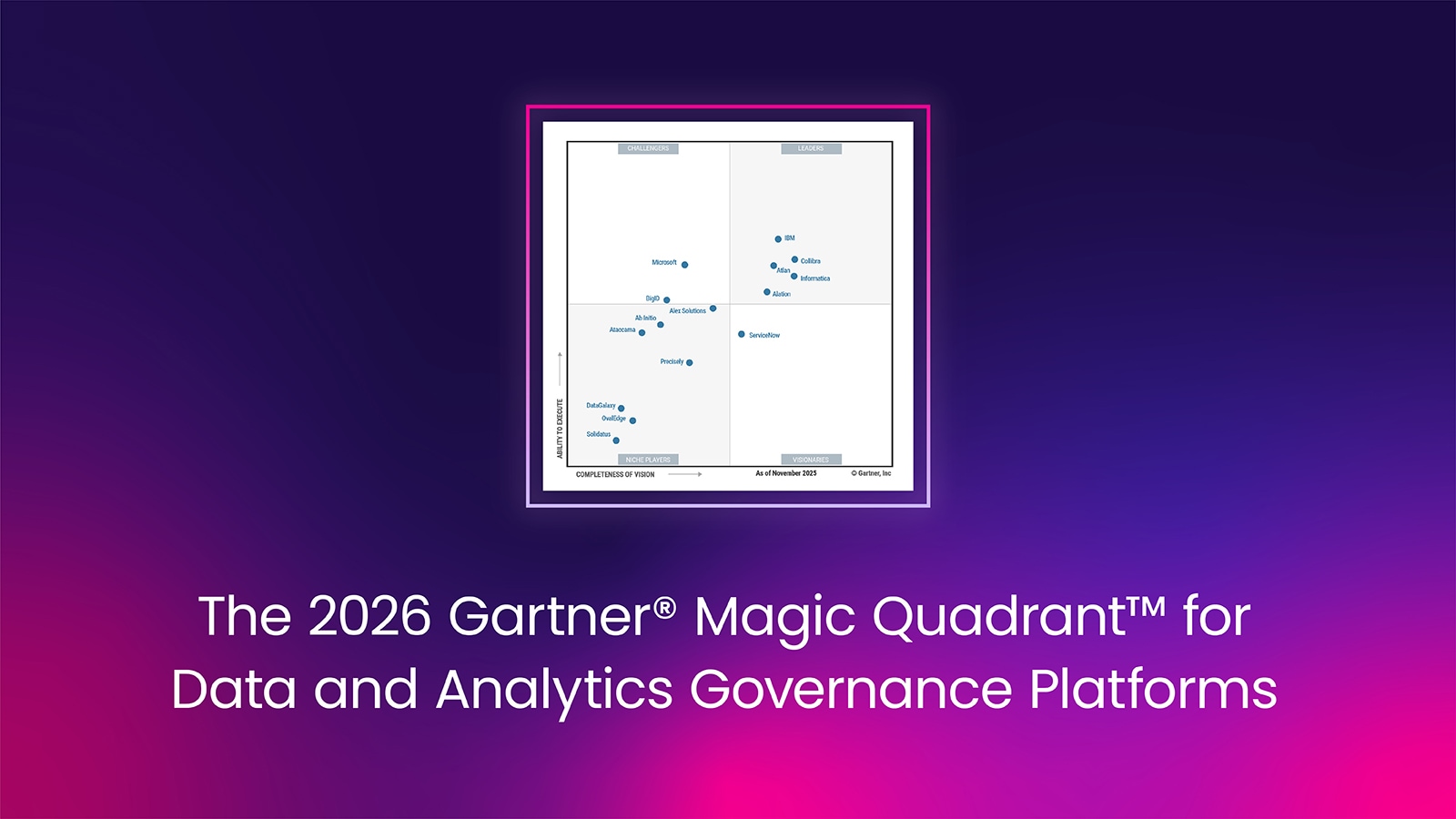

The validated leader in Data Quality.

The strongest product vision.

Different research firms. Same conclusion.

A 5-time Leader in the Gartner® Magic Quadrant™ for Augmented Data Quality Solutions