Market-leading data quality, powered by AI

Elevate data quality with AI-powered automation.

Deliver trusted, compliant data for reporting, AI, and business initiatives, at any scale, in any environment.

Drive business outcomes with trusted data

Make your data AI-ready

Go beyond checking data quality — actively improve it. Trusted, high-quality data fuels accurate AI models and reliable analytics for smarter decisions and innovation.

Mitigate regulatory & security risks

Achieve complete, trusted, audit-ready data to reduce the risk of penalties. Ensure compliance with industry regulations such as Basel, Solvency, the Dodd-Frank Act, and others.

Reduce costs & improve efficiency

Cut down manual work and repetitive fixes with AI-driven automation. Increase data team efficiency by up to 50% while lowering operational costs.

Improve customer lifetime value

Deliver trusted data for campaigns, reports, and apps that drive personalized experiences. Keep customers engaged, satisfied, and coming back.

Grow market share & drive revenue

Move faster with clean, reliable data. Improve forecasting and pricing strategies, and drive product innovation to power revenue growth.

The most complete data quality solution with unbeatable time to value

AI-powered automation

Leverage machine learning, GenAI, and AI agents to accelerate your data quality efforts. Free your teams from manual tasks and empower them to work more efficiently.

Complete data quality lifecycle

Find and fix data issues automatically with built-in validation, cleansing, and remediation so your data is clean, trusted, and ready for use.

Scalable processing & deployment flexibility

Process data with edge and pushdown processing, achieving peak performance and security. Deploy in any cloud or on-premise environment.

Unified data trust platform

Access data catalog, data quality, observability, and lineage — natively built in ONE platform — to provide a smooth experience and deliver data trust across your entire landscape.

Empower your business with end-to-end data quality

Discover how we bring together AI and automation to provide end-to-end data quality across your entire data ecosystem.

Explore & understand

Start your data quality journey with clear insights from day one. Connect to any source, assess data quality instantly, and enable smart anomaly detection for real-time alerts on unexpected patterns.

Out-of-the-box data quality validation

Our system automatically validates data quality — no custom rules required. In minutes, you’ll see what data exists, which sources matter, and how clean they are.

Data profiling

Our system profiles critical datasets and automatically generates distributions, pattern analyses and many other insights. This way you can quickly identify issues and create targeted data quality rules.

Anomaly detection and time series analysis

The system automatically flags unexpected spikes and drops that traditional data quality rules might miss. It monitors transaction data — such as orders, payments, shipments — to detect suspicious events and resolve issues.
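As a rough illustration of how spikes and drops in a transaction series can be flagged without hand-written rules, here is a minimal sketch based on a trailing-window z-score. It is not Ataccama's detection method; the function name `flag_anomalies`, the window size, the threshold, and the sample order counts are all assumptions made for this example.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=7, threshold=3.0):
    """Return indices whose value deviates from the mean of the preceding
    `window` points by more than `threshold` sample standard deviations."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A perfectly flat history: any change at all is unexpected.
            if values[i] != mu:
                flagged.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily order counts with one obvious spike at index 7.
daily_orders = [100, 102, 98, 101, 99, 103, 100, 250, 101, 97]
print(flag_anomalies(daily_orders))
```

A simple statistic like this catches sudden jumps but ignores seasonality and trend, which is where a dedicated time series model would be needed.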

Define & monitor

Setting up your data quality program is easier and faster than ever, thanks to AI. Define rules for key datasets and KPIs. Monitor data quality trends over time and data consistency during migrations. Get alerts for anomalies or data quality drops, so you’re always ready to act.

AI-powered rule creation

Just write a prompt and GenAI will generate data quality rules and test data to validate them. The AI Agent automates bulk rule creation and mapping. Save hours, skip the manual work, and get data quality rules faster.

Data quality evaluation

Build simple or more complex rules using reference data, Loqate for address checks, or external sources via API. Once set, run evaluation across your systems and get a clear view of your data quality.

Data quality monitoring & reporting

Automate data quality monitoring to track data quality trends over time. View reports directly in the platform or integrate data quality metrics into Tableau or Power BI via API for tailored enterprise analytics.

Reconciliation checks

Ensure data consistency during migrations. Map, compare, and align source and target tables with ease — catching mismatches early and keeping your systems in sync.
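Conceptually, a reconciliation check compares keyed records in a source table against the target and reports what is missing, unexpected, or different. The sketch below shows that idea on in-memory rows. It is a generic illustration, not the platform's implementation; the `reconcile` function, the `id` key, and the sample rows are hypothetical.

```python
def reconcile(source_rows, target_rows, key="id"):
    """Compare two lists of dict rows by `key` and summarize the differences."""
    source = {row[key]: row for row in source_rows}
    target = {row[key]: row for row in target_rows}
    return {
        # Keys present in the source but never migrated.
        "missing_in_target": sorted(source.keys() - target.keys()),
        # Keys that appear only in the target.
        "unexpected_in_target": sorted(target.keys() - source.keys()),
        # Keys present on both sides whose row contents differ.
        "mismatched": sorted(
            k for k in source.keys() & target.keys() if source[k] != target[k]
        ),
    }


# Hypothetical source and target tables after a migration.
source_rows = [
    {"id": 1, "amount": 10},
    {"id": 2, "amount": 20},
    {"id": 3, "amount": 30},
]
target_rows = [
    {"id": 1, "amount": 10},
    {"id": 2, "amount": 25},
    {"id": 4, "amount": 40},
]
print(reconcile(source_rows, target_rows))
```

At real table sizes the same comparison would typically run as row counts, checksums, or joins inside the database rather than in application memory.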

Improve quality

Once you’ve assessed data quality and identified issues, it’s time to act. Automate remediation with powerful transformation plans that cleanse and standardize data at scale. Fix any remaining issues manually. Deliver clean, trusted data that’s ready for use.

Automated standardization & cleansing

Standardize values — like country codes, phone numbers, and SKUs — and fix common cleansing issues. Ensure clean data flows into every system with no-code automated transformation plans.

Automated data enrichment

Enhance your data with third-party records. Enrich customer, company, and location records using the latest verified data from postal services, carriers, credit bureaus, and more. All automated for maximum reliability.

Record-level issue remediation

Resolve data quality issues directly in the platform, or export them to Jira, ServiceNow, or other systems via API to fit your existing workflows.
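To make the standardization step described under "Automated standardization & cleansing" more concrete, here is a minimal sketch of what standardizing country codes and phone numbers can look like in code. The platform itself offers this as no-code transformation plans, so everything here is an assumption for illustration: the lookup table, the function names, and the simplified rule that a bare 10-digit number belongs to the default country code.

```python
import re

# Hypothetical lookup of free-text country names to two-letter codes.
COUNTRY_CODES = {
    "united states": "US",
    "usa": "US",
    "u.s.": "US",
    "germany": "DE",
    "deutschland": "DE",
}


def standardize_country(value):
    """Map a free-text country value to a two-letter code, or None if unknown."""
    cleaned = value.strip().lower()
    if len(cleaned) == 2 and cleaned.isalpha():
        # Already looks like a two-letter code; only normalize the case.
        return cleaned.upper()
    return COUNTRY_CODES.get(cleaned)


def standardize_phone(value, default_country_code="1"):
    """Normalize a phone number to '+<digits>', or None if it cannot be parsed."""
    digits = re.sub(r"\D", "", value)
    if value.strip().startswith("+"):
        return "+" + digits if digits else None
    if len(digits) == 10:
        # Simplifying assumption: bare 10-digit numbers use the default country code.
        return f"+{default_country_code}{digits}"
    return None


print(standardize_country(" USA "))
print(standardize_phone("(555) 123-4567"))
```

Returning `None` for values that cannot be standardized keeps them visible, so they can be routed to manual remediation instead of being silently altered.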

Prevent issues

Once you define your data quality rules, you can reuse them anywhere. Business teams create the rules, and data engineers can easily plug them into pipelines or applications — stopping bad data before it spreads. It’s seamless teamwork for cleaner data.

Centralized, reusable rules library

Store all your data quality rules in one place and reuse them anywhere. Ataccama spots similar rules, prevents duplicates, and manages versions and approvals. Update a rule once, and it syncs across your pipelines automatically. Smart, centralized, and always up to date.

Developer-ready SDKs

Give data engineers the tools to add data quality checks right into apps and pipelines. Expose rules as SQL functions in Snowflake for easy integration with dbt pipelines. Or access data quality validations via REST or GraphQL APIs for smooth connection to external systems.

Scalable deployments

Deploy on-premise, in the cloud, or in hybrid environments, scaling freely as your needs evolve.

Reusable configurations

Save time by reusing DQ configurations across different environments and technologies.

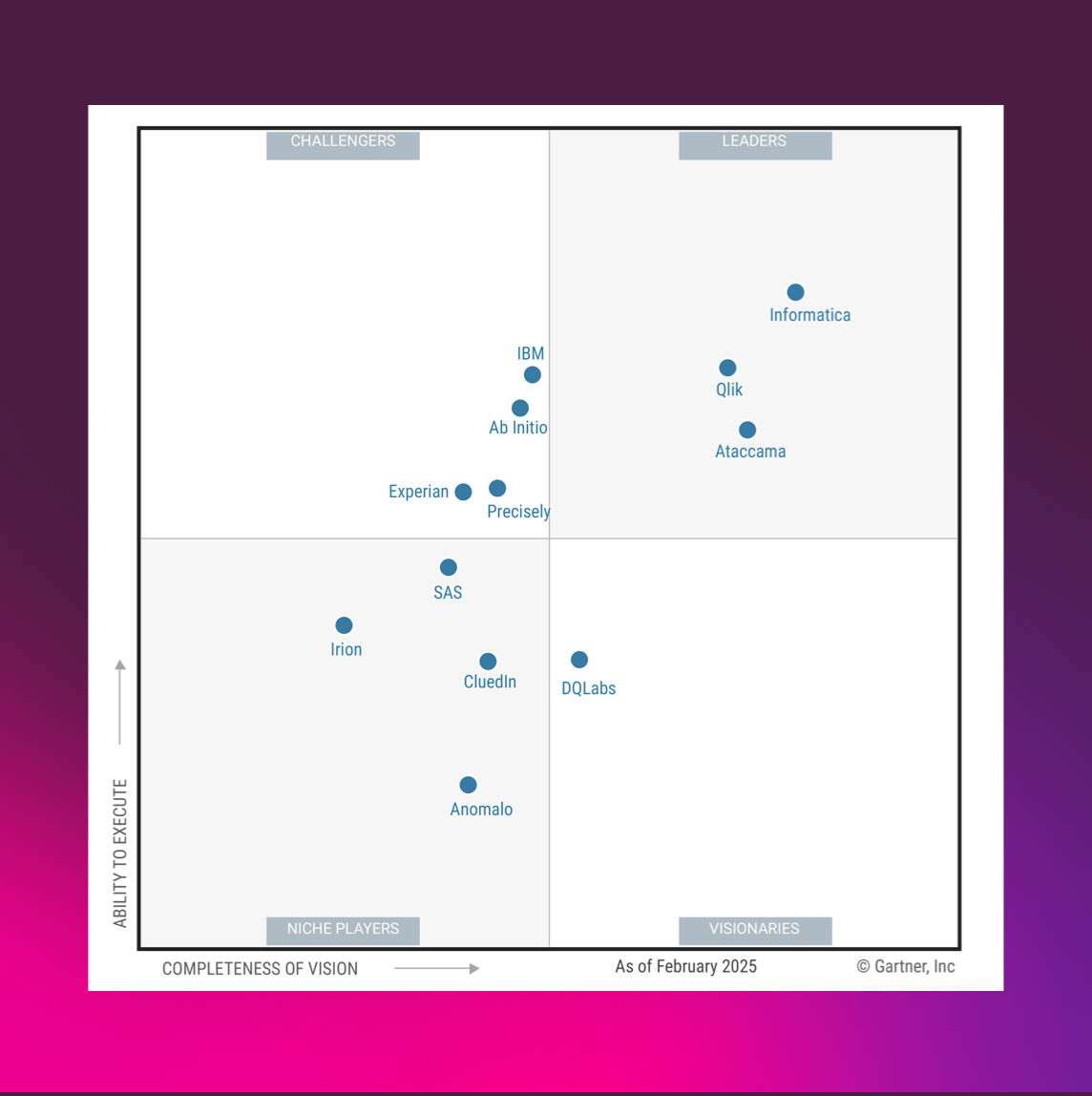

A Leader in The 2025 Gartner® Magic Quadrant™ for Augmented Data Quality Solutions

Ataccama is positioned as a Leader in the 2025 Gartner® Magic Quadrant™ for Augmented Data Quality Solutions for the 4th consecutive time.

Experience the Ataccama difference

Stronger data initiatives with improved business outcomes

management processes

in the first 3 years

Data quality software trusted by users and analysts alike

in action

Get a personalized demo of Ataccama ONE Data Quality from our experts

Discover practical use cases

See how Ataccama ONE Data Quality can address your specific business needs.

Tailored for your industry

Learn how businesses in your industry are leveraging Ataccama for success.